This year has been a year of revelations about how we visually perceive the world and whether those new nuggets of knowledge can be reflected in digital art. Even deeper than that, can we use our new knowledge to better understand each other and our individual world views? And might it give us a better clue about how our brains work?

All of this soul searching was inspired by two important milestones. The first was that Simon and I have been continuously cross-igniting each other with our explorations of Adobe Creative Cloud, Cinema4D and Unity (I am studying motion design at the School of Motion and Simon is learning Unity and Adobe Illustrator). The second was taking the time to research synesthesia, color perception and color theory. We had known for years that Neva was a synesthete, but didn’t realize how much of her perception is governed by color. Together the three of us have read Richard E. Cytowic’s compelling work Synesthesia (from the MIT Press Essential Knowledge series) and discovered many key aspects of human sense perception, guided by Neva’s enthusiastic eye-openers about her personal unearthly experiences with sensory couplings.

Many are aware that color, and vision itself, exist only in our brains. Isaac Newton, who showed how a prism disperses white light into the colors of the rainbow, realized this back in 1704 when he wrote that rays had no color. Yet it was jaw-dropping to learn that, according to recent research, the neural network supporting the conscious experience of color in the human brain (V4) can bypass the usual retinal inputs. While right V4 activates when viewing actual electromagnetic waves, left V4 activates in synesthetic brains in response to hearing spoken words or sounds, or thinking of graphemes. In simpler language, a blind or color-blind synesthete can see colors with their inner vision that are impossible for them to perceive with their eyes!

Neva often tells us that it is impossible to recreate the diversity of color that she sees in her head and that she feels sorry for our limited color perception, because the number of colors she sees is so much greater than what is available to our eyes. She says many of the synesthetic colors she can observe fall outside of the existing color vocabulary: outside of the almost 17 million shades that a standard 24-bit display can show, and outside of the range that the cones in our retinas can register, as mapped in the CIE 1931 color space. This is why Neva isn’t excited about any visual input (like paintings or design) but prefers audio or tactile input that triggers those richer colors in her left V4, although too much audio input can also mean overstimulation. In Neva’s case, even though she literally thinks and memorizes things in colors, and produces facts from her memory by recalling a color sequence and translating it back into words or musical notes, traditional visual input (via the eyes) produces the weakest response.
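The figure of almost 17 million matches the number of colors a 24-bit RGB display can encode, which may be where it comes from (that is an assumption; the source of the number is not stated here). The arithmetic, as a quick sketch:

```python
# Assumption: 8 bits per channel, three channels (red, green, blue),
# as on a typical 24-bit display.
BITS_PER_CHANNEL = 8
levels_per_channel = 2 ** BITS_PER_CHANNEL   # 256 intensity levels
total_colors = levels_per_channel ** 3       # every R, G, B combination

print(total_colors)  # 16777216
```

This counts what a screen can encode, not what the eye can tell apart, so it is only a rough reference point.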

For Simon and me, on the contrary, the way we both infer and memorize information is very visual in the traditional sense: we have to see it, sketch it out and write it down to understand it better. Simon has always been fascinated by color. At age 5 he was constantly experimenting with different light wavelengths and paint mixes, and he actually learned to type by compiling long lists of all the hues he knew in several languages. His later projects included color as a way to express fractions, color wheels, optical illusions in Processing, a camera obscura and, more recently, a whole CSS color encyclopedia and a color palette generator based on the color theory he has been learning.

From the research cited by Richard Cytowic, we have learned why conventional color theory is inadequate. “Color is perhaps the best example of how the brain constructs reality rather than passively taking in the world as given”, Cytowic writes. The brain actively seeks what interests it and uses its color apparatus to determine the object’s constant features in an ever-changing visual environment. This is a survival mechanism we have evolved. Color wouldn’t have much biological utility if we perceived a different color every time ambient lighting slightly changed, especially in a moving object. And yet, conventional color theory states that the retina’s cone receptors respond to wavebands of red, green and blue light, which would make perceived color sensitive to slight changes in lighting. Apparently, what actually happens is that the brain “assigns a stable color to a surface by computing a simple ratio. What V4 does is compare the relative lightness on long, medium and short wavebands reflected from a given point to that reflected from surrounding surfaces.”

This was first demonstrated by Edwin Land, a distinguished optical scientist better known as the inventor of the Polaroid instant camera. His experimental setup invited the participants to disbelieve their eyes: he placed two identical abstract Piet Mondrian-style prints next to each other, each lit by three projectors that let him mix long, middle and short wavelengths in any ratio of brightness. Land showed that even when the energy flux reaching the eye from two different rectangles on the prints was exactly the same, the brain perceived the rectangles as two different colors, depending on their lightness relative to the surrounding rectangles.
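To make the “simple ratio” idea concrete, here is a toy sketch in Python. It is not Land’s actual procedure or a model of V4: the function name `relative_lightness`, the flux numbers and the use of a plain average over the surround are all assumptions made for illustration.

```python
def relative_lightness(patch, surround):
    """Per-waveband ratio of a patch's flux to the mean flux of its surround.

    `patch` is a (long, medium, short) tuple; `surround` is a list of such
    tuples for the neighbouring rectangles. Units are arbitrary.
    """
    ratios = []
    for band in range(3):
        mean_surround = sum(s[band] for s in surround) / len(surround)
        ratios.append(patch[band] / mean_surround)
    return tuple(ratios)

# Two rectangles sending exactly the same flux to the eye (hypothetical values)
flux = (30.0, 30.0, 30.0)

# ...but sitting among different neighbours
surround_a = [(60.0, 30.0, 15.0), (60.0, 30.0, 15.0)]
surround_b = [(15.0, 30.0, 60.0), (15.0, 30.0, 60.0)]

color_a = relative_lightness(flux, surround_a)
color_b = relative_lightness(flux, surround_b)
print(color_a)  # (0.5, 1.0, 2.0)
print(color_b)  # (2.0, 1.0, 0.5)
```

The flux is identical, yet the ratios differ, which is the gist of why the two rectangles can look like different colors.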

We have many areas across the brain that constitute “the visual brain”, but color is autonomous, entirely located in V4 and nowhere else. In terms of retinal input, V4 is concerned only with calculating ratios! This is why color perception in the general population is relatively coarse, and why the color network has far fewer cells than the networks responsible for determining form or spatial location, Cytowic explains. An even more curious fact is that the brain doesn’t perceive color, form and motion simultaneously but sequentially. We first perceive color, 100 milliseconds later we detect motion and only later do we discern form. These asynchronous attributes are then constructed into a simultaneous experience, another example of constructing reality.

These fantastic discoveries have prompted Simon to come up with some nifty visualizations.

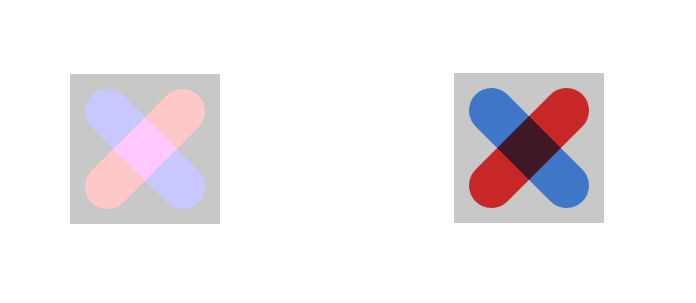

In this first visualization, he was playing with the concept of blending modes, which I had shared from one of my motion design courses, and combined it with an experiment to “fool the eye”. The image on the left is static. The image on the right changes, depending on the blending mode. Does the grey background behind the cross also change? Why do you see it change?

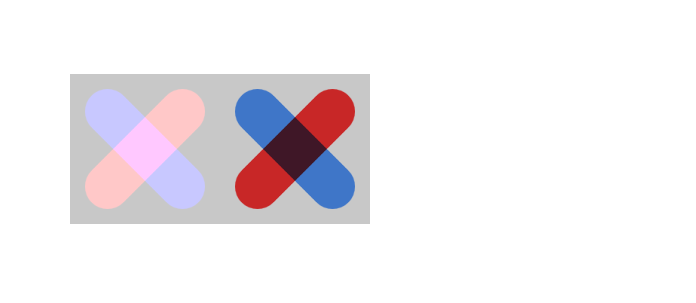

The grey background actually remains the same, but its ratio to the red and the blue of the cross changes, thus fooling us into thinking it changes, too. Do the images below reveal the same grey background or two different shades of grey?

Answer: they are the same!
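For reference, this is what two common blending modes compute per channel. It is a minimal sketch using the standard multiply and screen formulas; the colors and the way the cross overlaps are hypothetical and not taken from Simon’s sketch.

```python
def multiply(base, blend):
    """Multiply mode: channel-wise product, so the result is never lighter."""
    return tuple(b * t for b, t in zip(base, blend))

def screen(base, blend):
    """Screen mode: inverted product of the inverses, never darker."""
    return tuple(1 - (1 - b) * (1 - t) for b, t in zip(base, blend))

# Channels are in the range 0..1 (assumed colors for illustration)
GREY = (0.5, 0.5, 0.5)   # background, not covered by the cross
RED = (1.0, 0.0, 0.0)    # one bar of the cross
BLUE = (0.0, 0.0, 1.0)   # the other bar

overlap_multiply = multiply(RED, BLUE)
overlap_screen = screen(RED, BLUE)
print(overlap_multiply)  # (0.0, 0.0, 0.0)
print(overlap_screen)    # (1.0, 0.0, 1.0)
print(GREY)              # (0.5, 0.5, 0.5) in both cases
```

Only the pixels where the bars overlap are recomputed. The grey value never enters either formula, so any change we see in it comes from our own perception.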

Simon and Neva have also tried bringing “impossible” colors into the visible world by creating little JavaScript sketches that allow the viewer to see the so-called stygian and self-luminous hues. These “chimerical” colors are imaginary and can be seen temporarily after staring at a strong color for about 10 seconds. In the example below, keep staring at the cross inside the yellow circle. You will eventually see an “afterimage” against the black background that will be simultaneously dark and impossibly saturated: stygian blue. No physical object can have this color; it is impossible to achieve through normal vision. The current explanation for this effect is cone fatigue (some of the cones in your retina become tired and non-responsive, changing your perception).

An even more mesmerizing effect can be achieved by staring at green on a white background. The afterimage, self-luminous red, seems to glow and look brighter than white:

When I was exploring how an object’s appearance changes depending on its speed and acceleration (squash and stretch in animation), Simon showed me that at relativistic speeds there are effects in the visible world that we don’t perceive. For example, had we been able to register this effect, an object moving in the direction of our eyes would appear to bend towards us. Light from the center of such an object reaches us earlier than light from its ends, so at any instant we would see the center further along its path than the ends.
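The bending can be reproduced with nothing more than light travel time. The sketch below is not Simon’s visualization: it assumes a straight rod lying across the line of sight, approaching an observer at the origin, with units chosen so that the speed of light is 1. The function name `apparent_x` and all default values are made up for illustration.

```python
import math

def apparent_x(y, x0=10.0, v=0.5, t_obs=12.0):
    """Where along its path a rod point is seen at time t_obs.

    The point has sideways offset y and moves as x(t) = x0 - v * t.
    The light seen at t_obs left the point at t_emit, where
    (t_obs - t_emit)^2 = x(t_emit)^2 + y^2 (speed of light = 1, v < 1).
    """
    a = 1.0 - v * v
    b = 2.0 * (x0 * v - t_obs)
    c = t_obs * t_obs - x0 * x0 - y * y
    # The smaller root is the emission time that lies in the past.
    t_emit = (-b - math.sqrt(b * b - 4.0 * a * c)) / (2.0 * a)
    return x0 - v * t_emit

# Apparent shape of the rod at one instant: (sideways offset, seen distance)
profile = [(y, apparent_x(y)) for y in (-3.0, -1.5, 0.0, 1.5, 3.0)]
for y, x in profile:
    print(f"y = {y:+.1f}  seen at x = {x:.3f}")
```

The center is seen closest to the observer and the ends trail behind, because their light had a longer way to travel and therefore left earlier. The straight rod looks like an arc bulging towards the viewer.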

It takes a trillion frames per second to capture light in motion, Simon told me, so our brains cannot perceive this relativistic effect at the rate our nerve cells fire impulses to one another (one impulse every 0.5 to 1 millisecond). Below is Simon’s visualization in slow motion (the green stripe is the object as it would be perceived by the observer, represented by an orange circle).

When I was practicing the ball bounce in Animate, Simon also built a ball bounce visualization trying to depict all the squashing and stretching on its surface. On a microscopic level, this might literally be how much is going on at the surface of a ball’s material 🤪

And one more recurring topic in all graphic and motion design software is, of course, their majesty The Bézier Curve. Simon had already made a Bézier editor before and this time around, he came up with the formulae in GeoGebra visualizing how the curve is actually traced by the software:

Simon’s earlier Bézier editor project:

Simon’s code: https://editor.p5js.org/simontiger/sketches/r4gW2mgIo
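One common way to trace a Bézier curve is de Casteljau’s algorithm, which repeatedly interpolates between neighbouring control points until a single point is left. The sketch below is a minimal Python version for illustration; it is not taken from Simon’s editor or his GeoGebra formulae, and particular design software may trace its curves differently. The control points are arbitrary.

```python
def lerp(p, q, t):
    """Point a fraction t of the way from p to q."""
    return tuple((1 - t) * a + t * b for a, b in zip(p, q))

def de_casteljau(control_points, t):
    """Evaluate a Bézier curve of any degree at parameter t (0..1)."""
    points = list(control_points)
    while len(points) > 1:
        # Replace each neighbouring pair with the point between them.
        points = [lerp(p, q, t) for p, q in zip(points, points[1:])]
    return points[0]

# A cubic curve: start point, two handles, end point (assumed values)
control = [(0.0, 0.0), (0.0, 1.0), (1.0, 1.0), (1.0, 0.0)]

# Sample the curve at 11 evenly spaced parameter values
curve = [de_casteljau(control, i / 10) for i in range(11)]
print(de_casteljau(control, 0.5))  # (0.5, 0.75)
```

The curve starts at the first control point and ends at the last one. The two middle points only pull it towards themselves, which is why they behave like handles in an editor.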

An impression of Simon’s earlier project, the Color Palette Generator in p5.js:

Simon’s code: https://editor.p5js.org/simontiger/sketches/MVVT1T01n

Simon’s CSS color encyclopedia:

Conclusion: there is more computation in color perception and in working with color than you might think, and there are more colors than you can imagine.