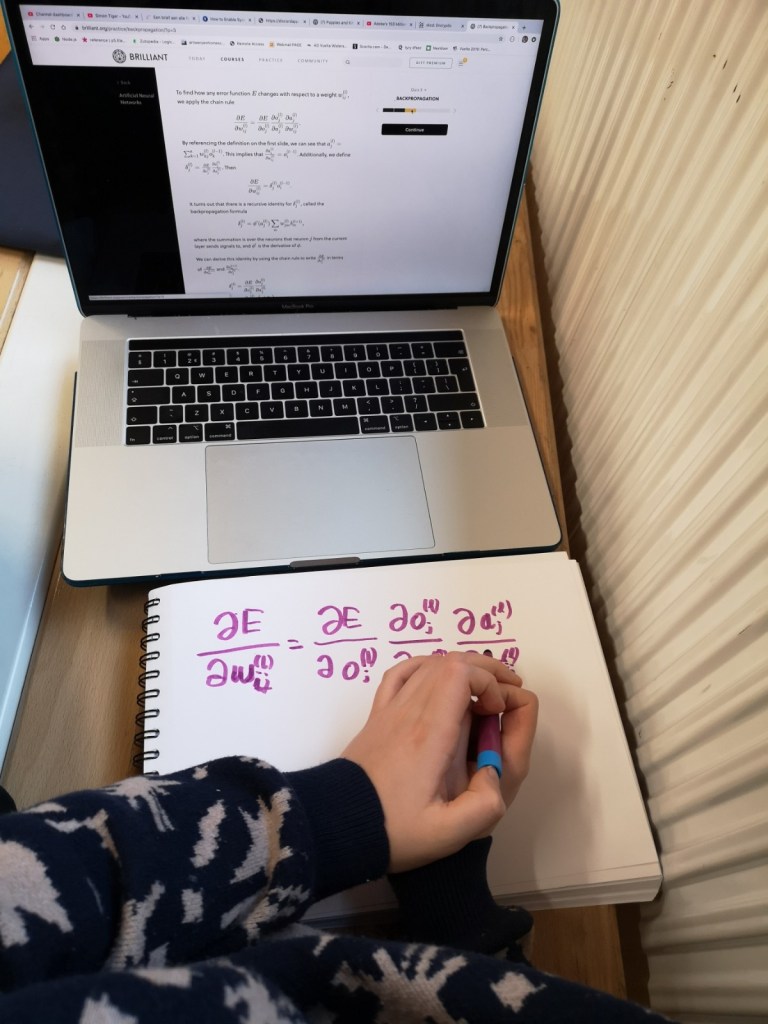

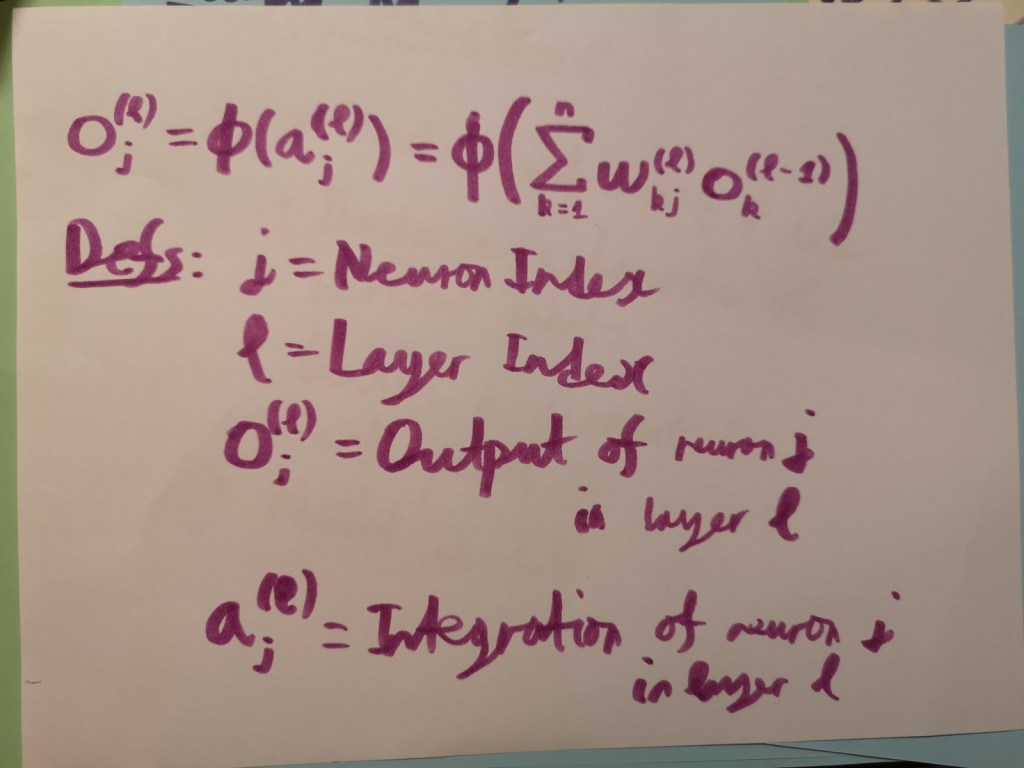

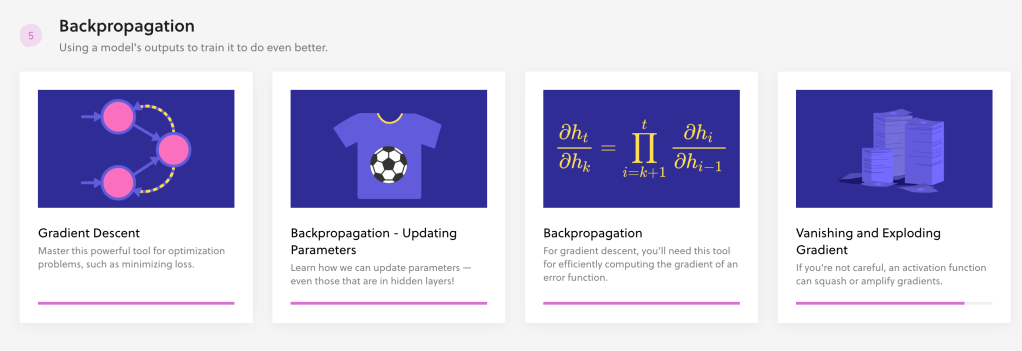

A little over a month ago, Simon picked up neural networks again (something he had tried a while ago but couldn’t grasp intuitively). He started the Artificial Neural Networks course on Brilliant.org and moved fairly quickly through vectors, matrices, optimisation, perceptrons and multilayer perceptrons. He even built his first perceptron in Python from scratch (I will publish a video about this project shortly). As soon as he reached the chapter on Backpropagation, however, he realised his current knowledge of Calculus wasn’t enough. This is how Simon, completely on his own, decided to get back to studying Calculus (something he had lost interest in last year). After gulping down several chapters of the Calculus Fundamentals course, Simon told me he was now ready to do Backpropagation, and he is nearly done with it. Next up: convolutional neural networks, the following chapter in the course!

As of today, these are his progress stats:

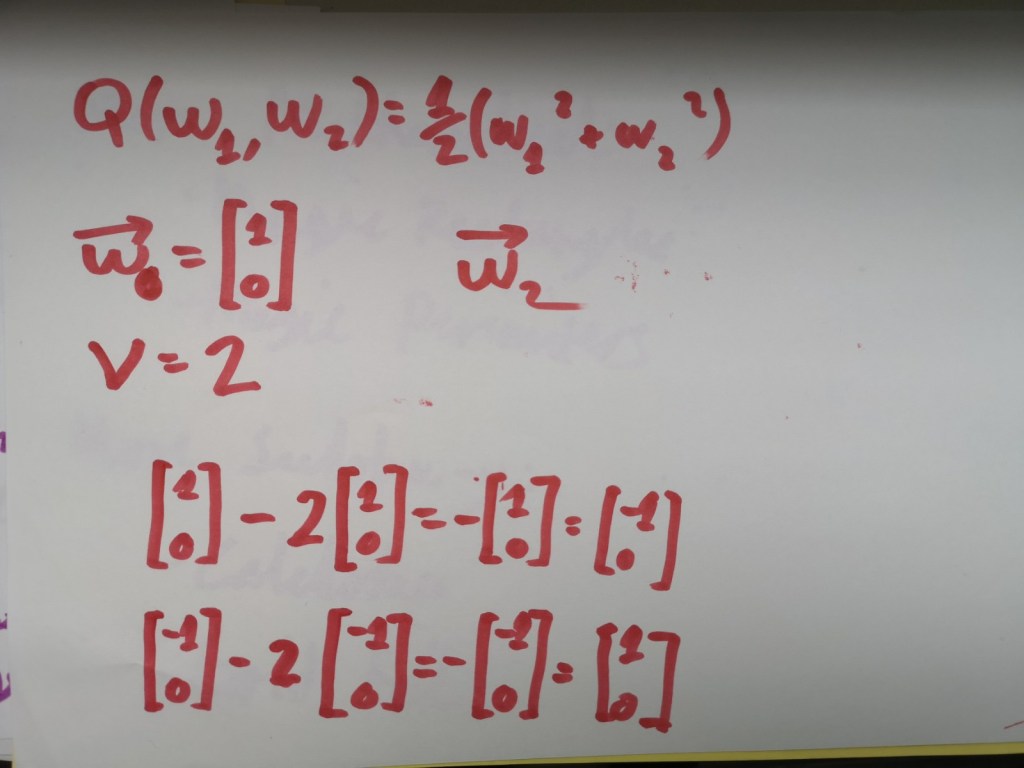

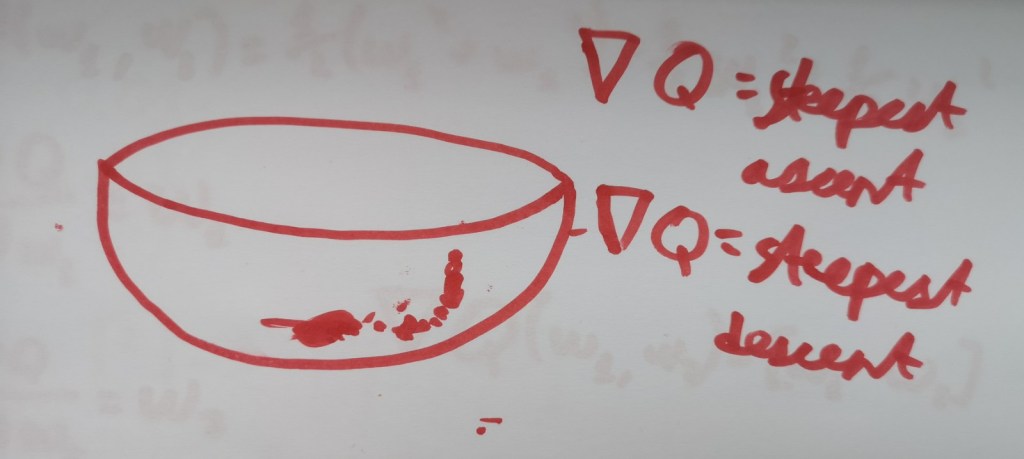

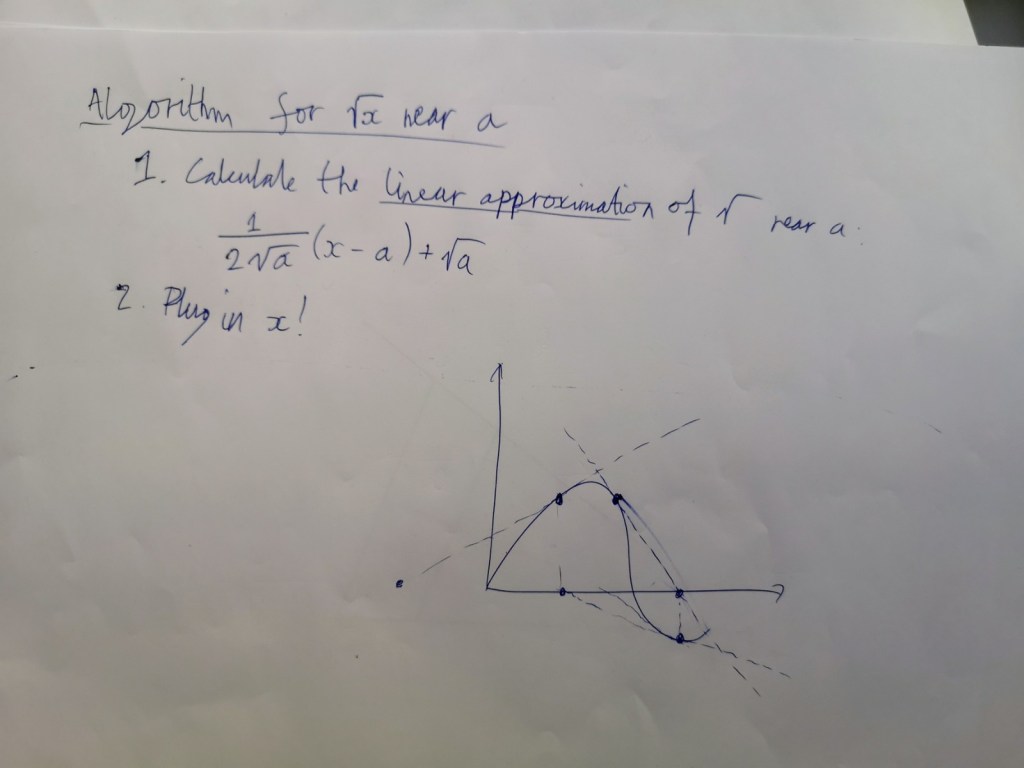

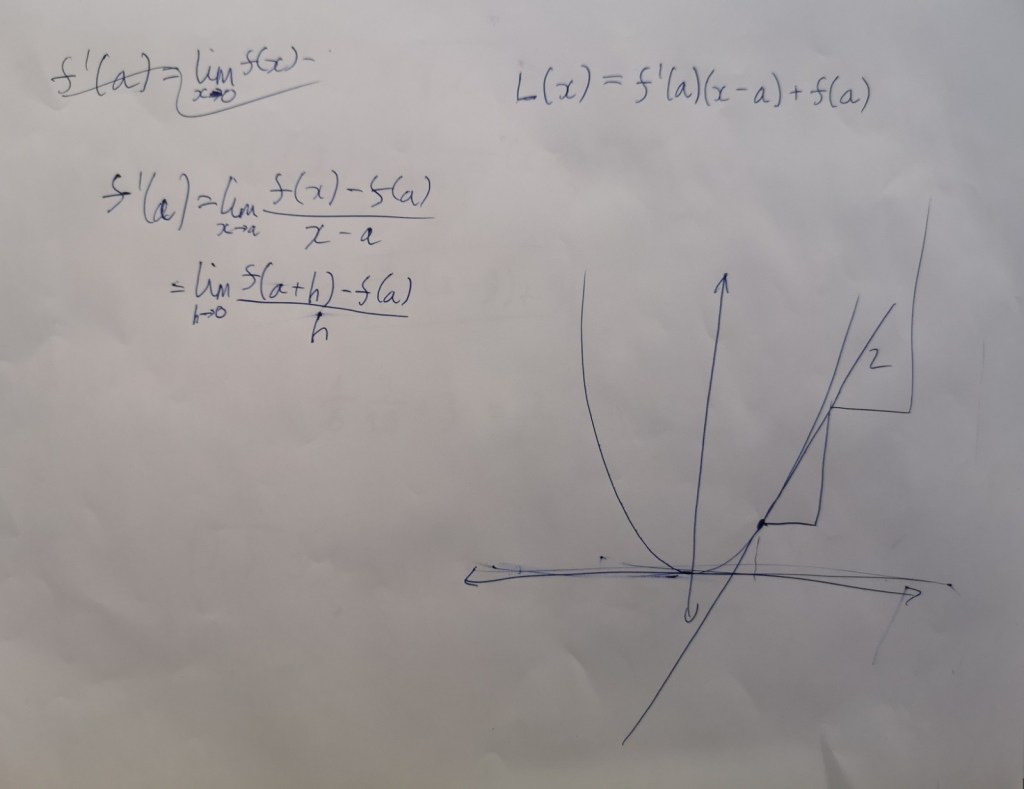

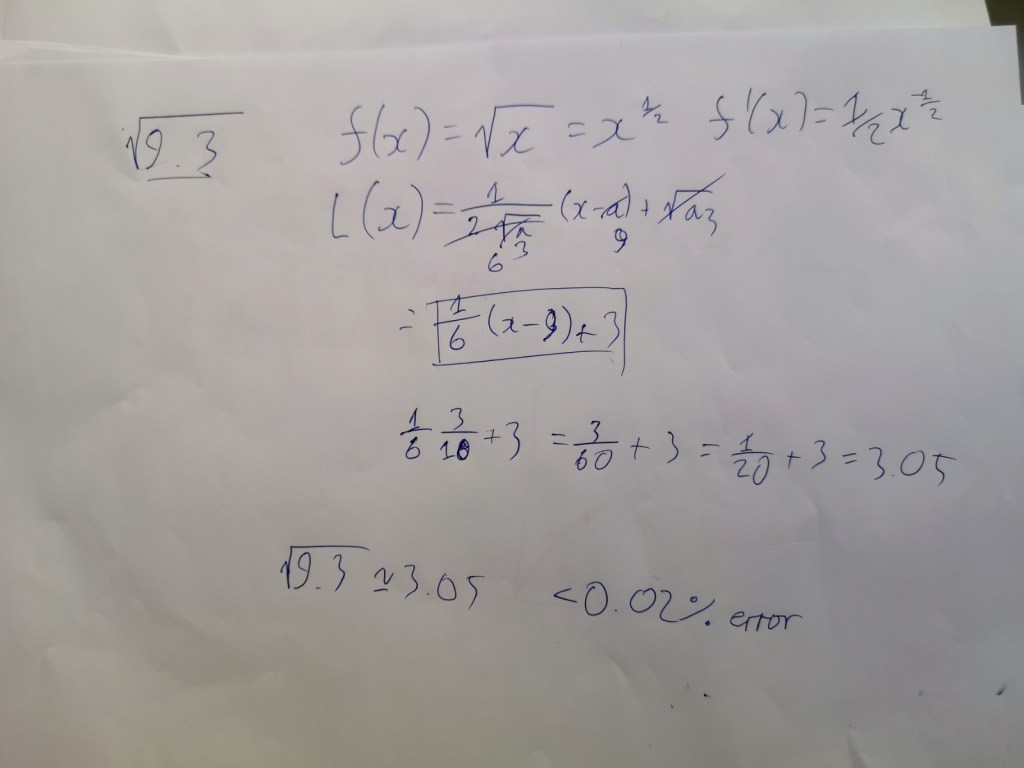

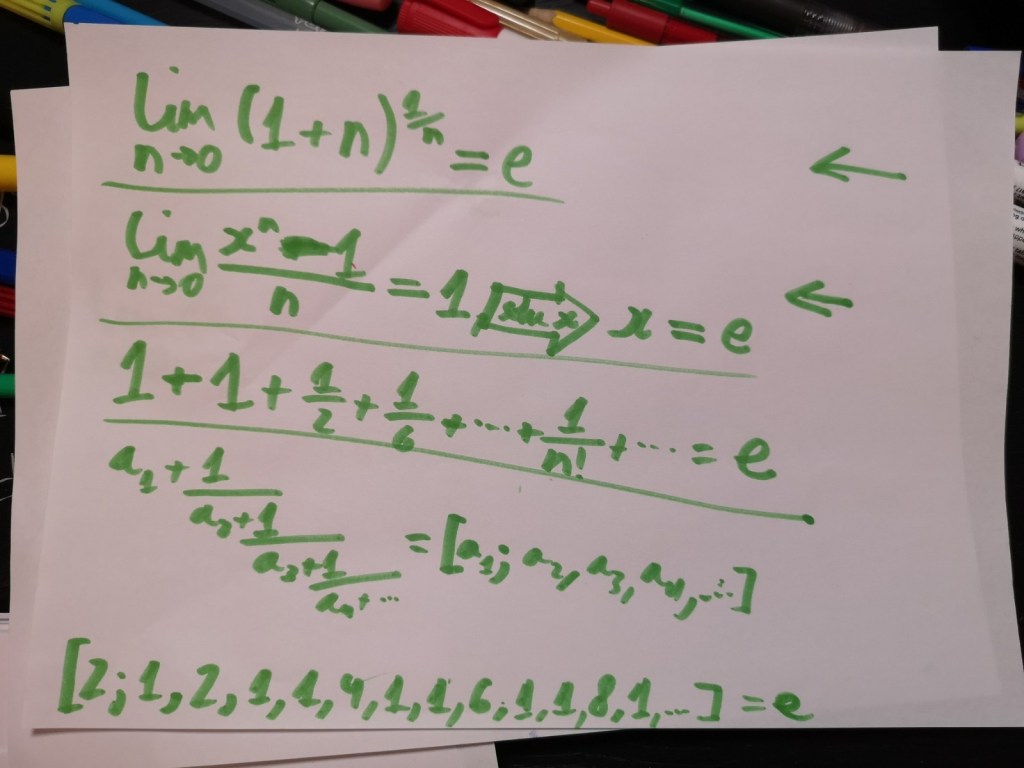

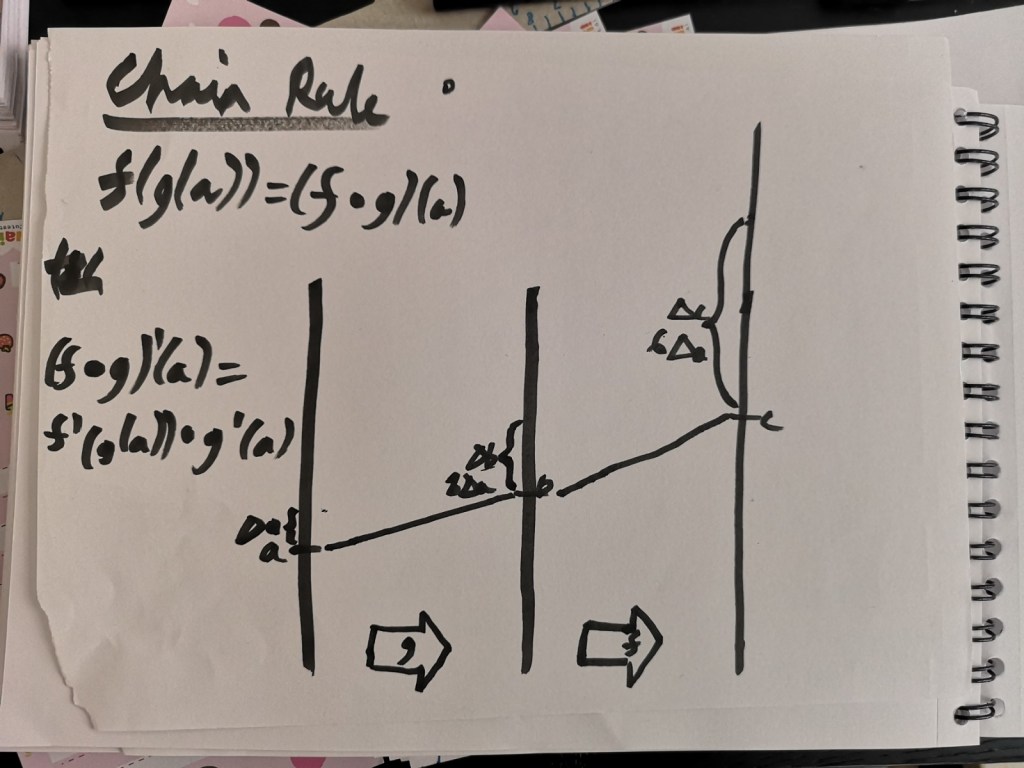

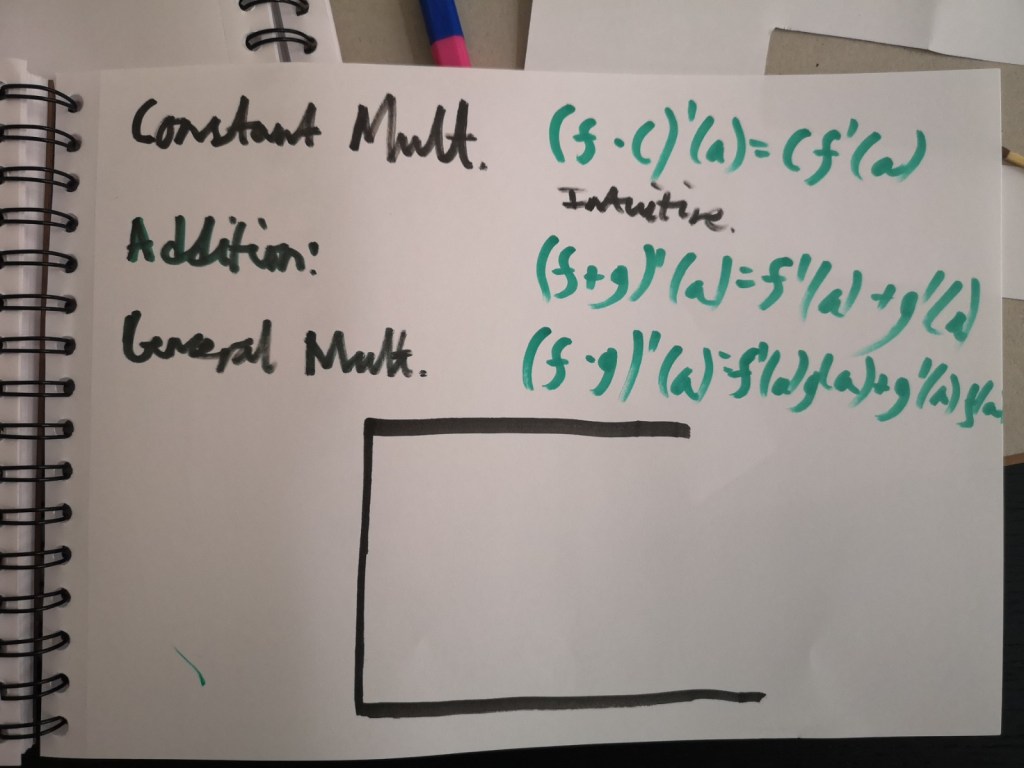

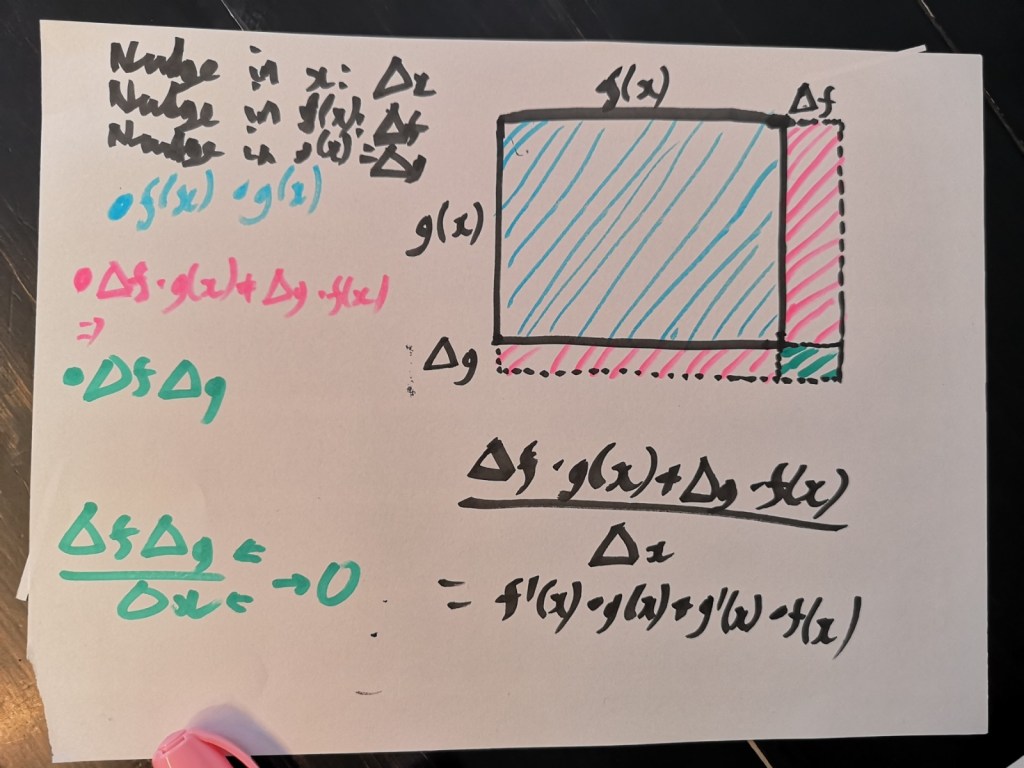

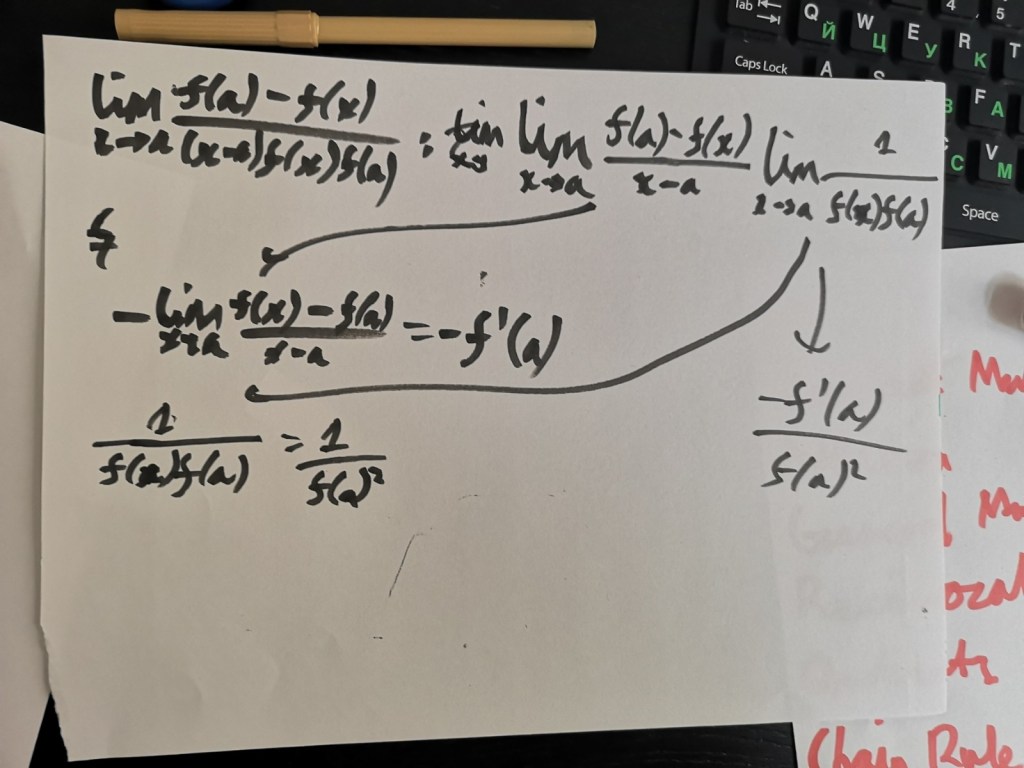

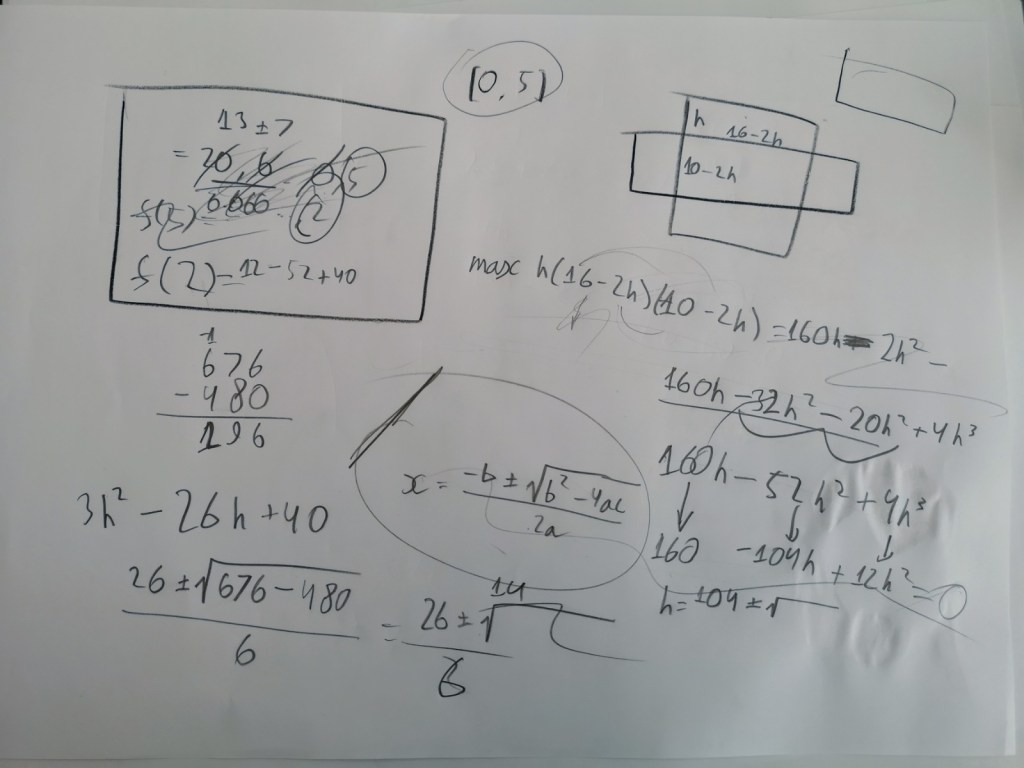

Below are some impressions of Simon working through Calculus Fundamentals.