Simon has been working on a very complicated topic for the past couple of days: Linear Regression. In essence, it is the math behind machine learning.

Simon was watching Daniel Shiffman’s tutorials on Linear Regression that form session 3 of his Spring 2017 ITP “Intelligence and Learning” course (ITP stands for Interactive Telecommunications Program and is a graduate programme at NYU’s Tisch School of the Arts).

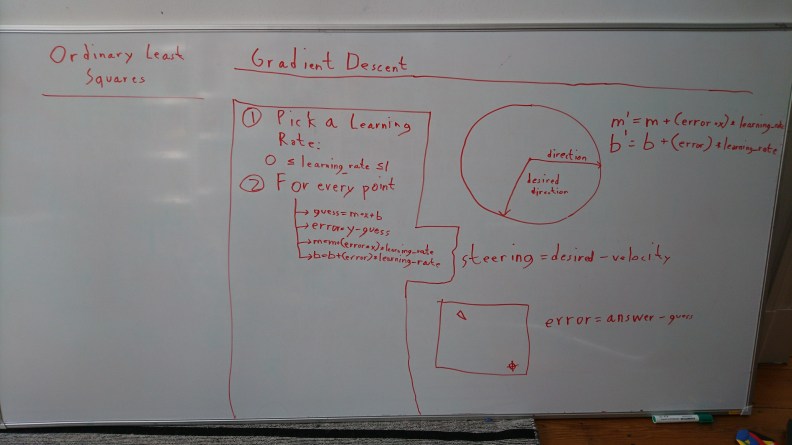

Daniel Shiffman’s current weekly live streams are also largely devoted to neural networks, so in a way, Simon has been preoccupied with related stuff for weeks now. This time around, however, he decided to make his own versions of Daniel Shiffman’s lectures (a whole Linear Regression playlist), has been busy with in-camera editing, and has written a resume of one of the Linear Regression tutorials (he actually sat there transcribing what Daniel said) in the form of an interactive webpage! This Linear Regression webpage is online at: https://simon-tiger.github.io/linear-regression/ and the Gragient Descent addendum Simon made later is at: https://simon-tiger.github.io/linear-regression/gradient_descent/interactive/ and https://simon-tiger.github.io/linear-regression/gradient_descent/random/

And here come the videos from Simon’s Liner Regression playlist, the first one being an older video you may have already seen:

Here Simon shows his interactive Linear Regression webpage:

A lecture of Anscombe’s Quartet (something from statistics):

Then comes a lecture on Scatter Plot and Residual Plot, as well as combining Residual Plot with Anscombe’s Quartet, based upon video 3.3 of Intelligence and Learning. Simon made a mistake graphing he residual plot but corrected himself in an addendum (end of the video):

Polynomial Regression:

And finally, Linear Regression with Gradient Descent algorithm and how the learning works. Based upon Daniel Shiffman’s tutorial 3.4 on Intelligence and Learning: